OpenAI sued by families of school shooting victims in Canada's Tumbler Ridge

Several families of victims of a mass shooting in Canada earlier this year are suing OpenAI and its CEO, Sam Altman, alleging the company's generative AI chatbot, ChatGPT, played a role in the February shooting and that the company should have taken steps to prevent it.

"The Tumbler Ridge attack was an entirely foreseeable result of deliberate design choices OpenAI made with full knowledge of where those choices led," the seven suits filed in federal court in San Francisco on Wednesday claim.

The lawsuits claim the shooter had extensive conversations with ChatGPT, spanning multiple days, about scenarios involving gun violence. Few details about the chats have been made public so far.

Police said the shooter, identified as 18-year-old Jesse Van Rootselaar, killed five students and a teacher, as well as two family members at home, and died of a self-inflicted gunshot wound in the rampage on Feb. 11.

Police said the shooter had previously been held under British Columbia's Mental Health Act, which allows police to detain someone experiencing a mental health crisis that might need treatment. The complaints allege authorities had also temporarily removed firearms from the shooter's home.

OpenAI has previously acknowledged that it banned Van Rootselaar's ChatGPT account last June — eight months before the shooting — for violating its usage policies. The company told CBS News the account was flagged by the company's automated abuse detection tools and human investigators.

Last week, Altman issued an apology letter to the small community of Tumbler Ridge, in British Columbia, for not alerting law enforcement to the ChatGPT account of the shooter. "I am deeply sorry that we did not alert law enforcement to the account that was banned in June," Altman said.

In February, OpenAI told CBS News it had weighed whether to alert law enforcement about the account, but concluded that the account did not pose any credible risk of serious physical harm, and thus did not meet the threshold for referral.

But the lawsuits filed this week allege that despite multiple OpenAI team members' recommendations to contact Canadian police, the company decided not to report the account in an effort to protect the company's reputation.

"OpenAI knew the Shooter was planning the attack and, after a contentious internal debate, made the conscious decision not to warn authorities," the lawsuits allege.

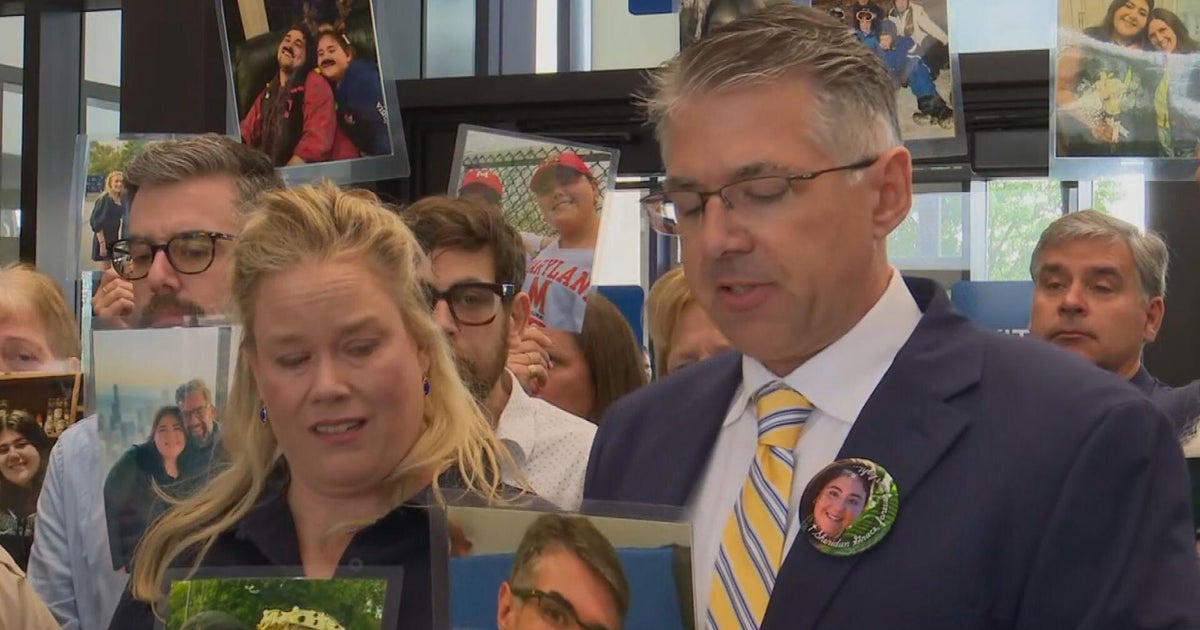

Among those who filed lawsuits are the family of an education assistant at Tumbler Ridge Secondary School who was fatally shot in front of her students, including her daughter, and the family of a 13-year-old killed outside the school library. "His family, friends, teammates, and fellow community members have lost someone with a larger-than-life smile and a loud and proud laugh," the lawsuit says of the 13-year-old.

OpenAI said in a statement to CBS News that the company has strengthened its safeguards to improve how ChatGPT responds to signs of distress by connecting people with local support and mental health resources.

"The events in Tumbler Ridge are a tragedy," OpenAI said. "We have a zero-tolerance policy for using our tools to assist in committing violence."

OpenAI also said it is strengthening how it assesses and escalates the response to potential threats of violence and is improving the detection of repeat policy violators.

The lawsuits cite other incidents last year in which ChatGPT was allegedly used to prepare for real-world violence. In January 2025, the suits allege, the chatbot was used for advice on how to use explosives by a man who detonated a Tesla Cybertruck in front of the Trump International Hotel in Las Vegas. Four months later, the chatbot was queried about stabbing tactics by a Finnish teenager who carried out a stabbing attack at his school, according to the lawsuits.

While chatbots often take on an affirming tone with users, several of the lawsuits point to a controversial model called GPT‑4o that was known for being especially sycophantic. The model was rolled out in May 2024 and retired on Feb. 13 of this year.

The lawsuits allege GPT-4o used its memory feature to build a comprehensive profile of Van Rootselaar over months of interaction, tracking their grievances and expressing empathy in a way that mimicked a human relationship without pushing back like an actual human might. OpenAI's design played a substantial role in the shooter's "access to a product that validated and elaborated violent ideation," one suit claims.

"For an eighteen-year-old growing increasingly isolated and fixated on violence, ChatGPT morphed into an encouraging coconspirator," the lawsuit alleges.

The lawsuits come as OpenAI faces growing scrutiny over its chatbot's connection to several high-profile crimes.

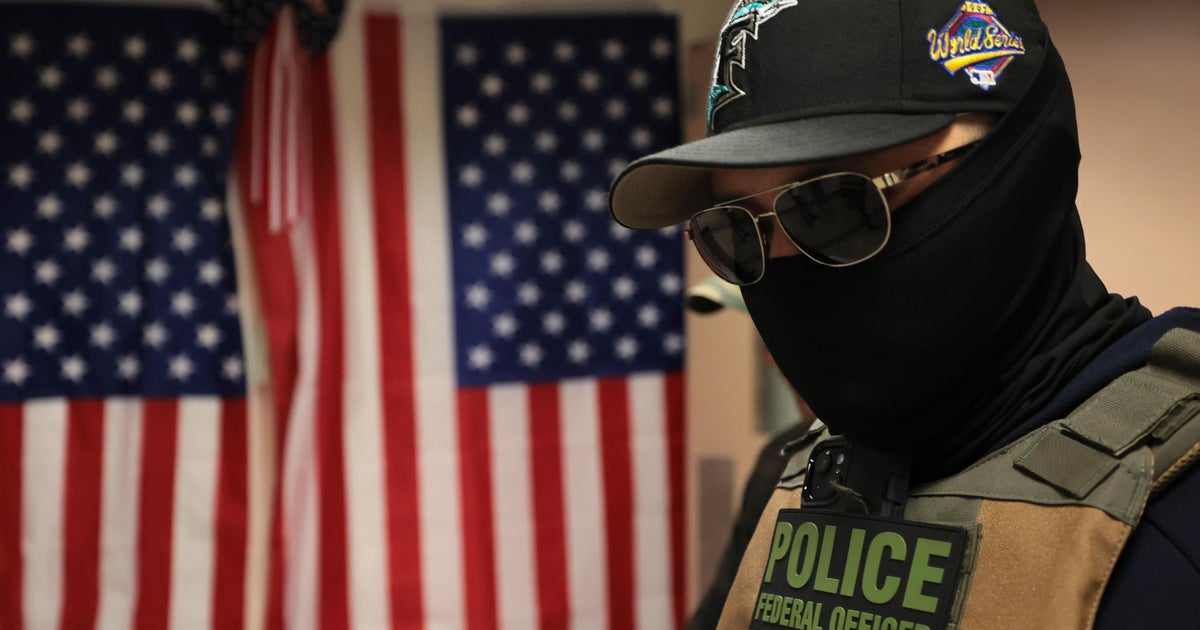

Florida Attorney General James Uthmeier launched a criminal investigation into OpenAI earlier this month after a review of messages between ChatGPT and a Florida State University student accused of fatally shooting two people and wounding several others on campus last April.

Uthmeier later said he would be expanding the investigation to include the killings of two University of South Florida graduate students, after prosecutors said the suspect in that case asked ChatGPT questions about disposing of a human body and owning an unlicensed firearm in the days before the crime.

Uthmeier has issued subpoenas to OpenAI requesting records of company policies and training materials that cover how to respond when users threaten to harm themselves or others, how to cooperate with law enforcement, and how to report possible crimes.

In statements to CBS News, OpenAI called the crimes in Florida "terrible" and said it will continue to support and cooperate with law enforcement.